AI tools are changing *how* Scrum Teams operate, but Scrum’s efficacy remains intact. Scrum, grounded in empirical process control theory, is more valuable than ever in an AI-accelerated world of work.

Panic headlines scream “Scrum is obsolete!”

No. There’s no evidence of that. Just the opposite, in fact.

What *has* changed are team dynamics, cognitive load, and skill fluidity. But Scrum’s guardrails and feedback loops matter *more* than ever as AI amplifies human output.

The Unchanging Core

Scrum Teams are cross-functional, self-managing, and capable of converting Product Backlog items into releasable functionality every Sprint. Scrum demonstrates that empiricism (i.e., transparency, inspection, adaptation) is among the best strategies to navigate complex problems. And AI doesn’t eliminate complexity; it accelerates it.

Examples

- Sprints are time-boxed experiments.

  - This remains unchanged.

- The Daily Scrum is a brief dialogue among developers who inspect their progress and adapt their daily plan toward the Sprint Goal.

  - This remains unchanged.

- Retrospectives are opportunities for honest reflection and deliberate evolution of working agreements.

  - This remains more important than ever.

  - Workflows, skills, and techniques are changing rapidly as new AI tools reach the market. A team that does not frequently reflect on these mutations may get stuck in a perpetual loop of forming → storming → reforming ⟳.

The Definition of Done is Your Transparency Firewall

In their Definition of Done, Scrum Teams can earn stakeholders’ trust and improve shared understanding by explicitly declaring how AI-generated material is used, verified, and integrated. “AI-assisted code must pass human review & human-authored tests” isn’t optional. It’s the new baseline; without this working agreement, teams accumulate invisible technical debt.

Transparency isn’t “nice to have”…it’s survival.

Example Definitions of Done

Example 1

Before

Our system is in production, all unit, e2e, and load tests passing, handles 5000 concurrent users, works on all browsers with 2% market share or higher in North America, on mobile iOS and Android devices with screen sizes 320 pixels or wider, is not behind more than one minor version of any dependent module, and contains no known security vulnerabilities.

After

Our system is in production, all unit/e2e/load tests passing, handles 5000 concurrent users, works on all browsers ≥2% North American market share, on mobile iOS/Android devices ≥320px wide, ≤1 minor version behind any dependency, contains no known security vulnerabilities, and:

- AI-generated code is clearly marked / documented.

- Human review completed on all AI-generated code.

- AI-assisted code passes the same test/lint/security checks as human-written code.

- All AI model output in production code is covered by human-authored acceptance tests.

- Prompt + model version + date recorded for significant AI-generated sections.

Example 2

Before

A Product Backlog item is considered Done when:

- Code is written, peer-reviewed, and merged.

- Unit and integration tests are in place and pass.

- Acceptance criteria are met.

- Relevant documentation is updated.

- Changes are deployed.

After

A Product Backlog item is considered Done when:

- Code is written, peer-reviewed (including all AI-generated code), and merged.

- Unit and integration tests are in place and pass (same coverage and quality for AI-generated code).

- Acceptance criteria are met (human-authored).

- Relevant documentation is updated.

- Changes are deployed.

- AI-generated code is clearly marked / documented.

- Human review completed on all AI-generated code.

- All AI model output in production code is covered by human-authored acceptance tests.

- Prompt + model version + date recorded for significant AI-generated sections.
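
Agreements like these are easier to keep when a machine checks them. The following is a minimal sketch in TypeScript, under stated assumptions: the `ChangeRecord` and `AiProvenance` shapes, their field names, and the `checkDefinitionOfDone` function are all hypothetical, invented for illustration. It covers only the AI-specific items and, as a simplification, expects provenance on every AI-generated change rather than only on "significant" sections.

```typescript
// Hypothetical shapes for this sketch; the field names are assumptions,
// not part of Scrum or of any real tool.
interface AiProvenance {
  prompt: string;
  modelVersion: string;
  date: string; // e.g. "2025-06-01"
}

interface ChangeRecord {
  id: string;
  aiGenerated: boolean; // "AI-generated code is clearly marked"
  humanReviewed: boolean;
  coveredByHumanAcceptanceTests: boolean;
  provenance?: AiProvenance; // expected when aiGenerated is true
}

// Returns the AI-specific Definition of Done items a change still violates.
// An empty array means those items are satisfied.
function checkDefinitionOfDone(change: ChangeRecord): string[] {
  const violations: string[] = [];
  if (!change.aiGenerated) {
    return violations; // the AI-specific items do not apply
  }
  if (!change.humanReviewed) {
    violations.push("Human review not completed on AI-generated code.");
  }
  if (!change.coveredByHumanAcceptanceTests) {
    violations.push("AI output not covered by human-authored acceptance tests.");
  }
  const p = change.provenance;
  if (!p || !p.prompt || !p.modelVersion || !p.date) {
    violations.push("Prompt, model version, and date not recorded.");
  }
  return violations;
}

// Example: reviewed by a human, but missing acceptance tests and provenance.
const exampleChange: ChangeRecord = {
  id: "change-1",
  aiGenerated: true,
  humanReviewed: true,
  coveredByHumanAcceptanceTests: false,
};

console.log(checkDefinitionOfDone(exampleChange));
```

Run against `exampleChange`, the check reports two violations. A team might run something similar in its delivery pipeline, but the value lies in the explicit agreement, not in this particular shape.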

How AI Reshapes Scrum Teams — The Visible Shifts

Scrum Team Size & Composition

Given current trends in AI tools, executives may finally heed Scrum’s advice to keep teams small!

Executives tend to think “more people = more productivity”. But the bottleneck in knowledge work is always the pace of decision-making (i.e., bureaucracy). More people means more bureaucracy, which means slower teams. Trends indicate that, as cognitive grunt work vanishes, small teams are able to make rapid decisions and keep bureaucracy to a minimum. Pre-2024, teams of 7 to 20 were common: a Product Owner, a Scrum Master, several coders, several testers, maybe a UX specialist, all surrounded by SMEs. It is more common now to see teams composed of:

- 2–3 Developers (capable of owning architecture, design, code, test, in close proximity with end users and key stakeholders)

- a Product Owner (capable of owning product vision and stakeholder management, maybe one of the Developers)

- a Scrum Master (capable of keeping transparency high and clearing the path for delivery, maybe one of the Developers)

Developers Must Own Quality & Delivery

Agile engineering practices are showing their value to a formerly reluctant audience. Table stakes certainly include: continuous integration & continuous delivery (CI/CD); collective code ownership; and pair-programming or ensemble-programming. These ways-of-working are more productive than ever.

Test-Driven Development (TDD), in particular, is proving to be a crucial skill and among the most reliable ways to verify the validity of AI-generated code, keep a coding agent on track, and ensure the code is always in releasable condition. TDD skeptics have become TDD hipsters (e.g., _“I was doing TDD before it was cool.”_). The optimal size of the behaviour/unit described in tests is still debated, and time will tell as techniques evolve; evidence is mounting that coding agents excel at writing and managing unit-level tests, while humans focus on describing acceptance-level tests. Without great automated acceptance tests, AI-generated code could too easily be a black box of hallucinations. (Imagine something like this: humans write high-level acceptance tests in Playwright or Cypress, and let AI prove its work with unit tests in Vitest or Jest.)
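
To make the split between acceptance-level and unit-level tests concrete, here is a small sketch in TypeScript. It is an illustration under assumptions, not a recommended setup: the shipping-fee rule is invented, and a hand-rolled `check` helper stands in for a real test runner so the example has no dependencies. In practice the acceptance-level checks might live in Playwright or Cypress and the unit-level checks in Vitest or Jest.

```typescript
// Tiny stand-in for a test runner so the sketch has no dependencies.
function check(name: string, condition: boolean): void {
  if (!condition) {
    throw new Error(`FAILED: ${name}`);
  }
  console.log(`ok: ${name}`);
}

// --- Implementation (imagine a coding agent produced this) ---
// Hypothetical business rule, invented for illustration:
// orders of 50.00 or more ship free; otherwise shipping costs 4.99.
// Amounts are in cents to avoid floating-point rounding.
function shippingFeeCents(orderTotalCents: number): number {
  if (orderTotalCents < 0) {
    throw new RangeError("order total cannot be negative");
  }
  return orderTotalCents >= 5000 ? 0 : 499;
}

function checkoutTotalCents(itemPricesCents: number[]): number {
  const subtotal = itemPricesCents.reduce((sum, price) => sum + price, 0);
  return subtotal + shippingFeeCents(subtotal);
}

// --- Acceptance-level tests (human-authored) ---
// These describe behaviour a stakeholder would recognise.
check(
  "a customer buying 20.00 + 15.00 of goods pays 39.99 in total",
  checkoutTotalCents([2000, 1500]) === 3999
);
check(
  "a customer buying 60.00 of goods pays no shipping",
  checkoutTotalCents([6000]) === 6000
);

// --- Unit-level tests (the kind an agent might write and maintain) ---
check("fee is 4.99 just below the threshold", shippingFeeCents(4999) === 499);
check("fee is 0 exactly at the threshold", shippingFeeCents(5000) === 0);
```

The acceptance-level checks pin down what the business asked for; the unit-level checks probe the edges of one function. If an agent rewrites `shippingFeeCents`, the human-authored checks still decide whether the result is acceptable.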

Product Owner Owns the Sequence & Content of the Product Backlog

POs still own vision and are accountable for the content and sequence of the Product Backlog. Product Owners decide the sequence in which new functionality will be released to end users (i.e., prioritization) and they decide what does and does not get developed. It is in this way they maximize (or fail to maximize) the value of the work of the team.

Use AI tools to reduce workload, but don’t outsource business decisions to the machines. Product Owners are now the human in the loop who ensures the Product Backlog isn’t populated with AI slop. Because AI tools can suggest priorities, author entire backlogs, and draft acceptance criteria, it is more important than ever to remember that every Product Backlog Item represents first and foremost a conversation. AI can draft 50 stories in seconds; that’s not the hard part. Dialogue with developers and stakeholders is essential, and it is the best means to achieve shared understanding about the work that needs doing.

Scrum Master Pursues Transparency, Transparency Raises the Quality of Decision-Making

Dependencies, working agreements, communication, techniques, outputs and outcomes: the Scrum Master helps make all aspects of the work transparent to the Scrum Team and their key stakeholders.

Scrum Teams embracing AI tools are apt to rapidly evolve…and it’s not going to be a smooth ride for most. If we are to take Marshall McLuhan’s advice: the form and shape of AI tools will impact teams more than the content they generate. How teams interact with, depend on, delegate to, or integrate AI (the medium) alters workflows, thinking patterns, collaboration, creativity, and culture far more than the specific content generated. And if we are to believe Alvin Toffler, teams exploring AI tools will need to welcome a pattern of rapid learning, unlearning, and relearning, to alleviate the sense of future shock.

No matter how agile, teams are apt to experience distress, discomfort, and disruption. Scrum Masters can mitigate the worst of these effects by keeping transparency high, facilitating frequent reflection and adaptation of working agreements (specifically with regard to AI-related tooling and techniques), and negotiating relentlessly throughout the organization to ensure their team has latitude, minimal dependencies on others outside the team, and a clear path to deliver incrementally. Teams that thrive will treat this as evolution, not crisis.

The Cost of Experimentation & Research Is Nearing $0

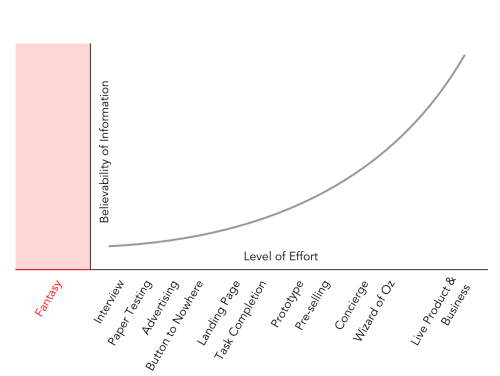

Giff Constable’s “Truth Curve” illustrates how the believability of the information an experiment produces (_benefit_) tends to rise with the level of effort (_cost_) the experiment requires: low-effort experiments yield less believable information than high-effort ones such as a working product.

AI tools have drastically reduced the cost of research and of working code. For example, generating a working prototype (app) can now cost less than conducting a human-to-human interview. Adding a landing page to an existing website may be less costly than drawing a wireframe on paper. The Truth Curve may be obsolete now (or it may withstand AI’s influence and reveal profound insights about the believability of user feedback when AI-generated content forms the experimental material).

But one thing is certain: this new reality presents Scrum Teams with incredible opportunities to shorten the build → measure → learn loop. What took days or weeks in 2022 might take minutes now. Scrum Teams must therefore be disciplined about the experiments they conduct. Skip “inside-the-building” debates; jump instead to cheap, high-truth tests (AI-spun prototypes, mock APIs).

Documentation

Document proliferation has long been a problem, but is amplified when the effort required to produce documents nears zero.

Scrum’s design suggests that pre-implementation artifacts are disposable, changeable, and lightweight, while post-implementation artifacts are version-controlled and audience-specific. It is time for Scrum Teams to double down on this distinction to avoid an AI-generated explosion of shelfware.

Which Documents are Valuable?

- Pre-2025: The source code and test cases.

- Post-2025: Just the test cases.

All other documents are disposable or can be rendered from existing artifacts (e.g., render the roadmap directly from the Product Backlog; render the Product Backlog directly from the Product Goal; render the source code directly from the test cases; and so on).
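
As one illustration of rendering a document from an existing artifact, the sketch below derives a plain-text roadmap from backlog data in TypeScript. Everything here is an assumption made for the example: the `BacklogItem` shape, the `targetSprint` field, and the `renderRoadmap` function are hypothetical, and a real team would read from whatever tool actually holds its Product Backlog.

```typescript
// Hypothetical, simplified shape of a Product Backlog item.
interface BacklogItem {
  title: string;
  targetSprint: number; // assumption: items carry a forecast Sprint number
  done: boolean;
}

// Renders a plain-text roadmap grouped by Sprint.
// Within each Sprint, items keep their backlog order.
function renderRoadmap(backlog: BacklogItem[]): string {
  const bySprint = new Map<number, BacklogItem[]>();
  for (const item of backlog) {
    const group = bySprint.get(item.targetSprint) ?? [];
    group.push(item);
    bySprint.set(item.targetSprint, group);
  }

  const sprints = Array.from(bySprint.keys()).sort((a, b) => a - b);
  const lines: string[] = [];
  for (const sprint of sprints) {
    lines.push(`Sprint ${sprint}`);
    for (const item of bySprint.get(sprint) ?? []) {
      lines.push(`- [${item.done ? "x" : " "}] ${item.title}`);
    }
  }
  return lines.join("\n");
}

// Example backlog with invented items.
const sampleBacklog: BacklogItem[] = [
  { title: "Checkout with saved card", targetSprint: 2, done: false },
  { title: "Guest checkout", targetSprint: 1, done: true },
  { title: "Order confirmation email", targetSprint: 2, done: false },
];

console.log(renderRoadmap(sampleBacklog));
```

Because the roadmap is regenerated on demand, there is no second document to keep in sync: the Product Backlog remains the single source and the rendered text is disposable.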

Summary

Compose small teams of people with complementary skills who can make decisions rapidly. Sharpen the Definitions of Done across teams with AI-specific agreements. Rearrange experiments per the Truth Curve. Delete all disposable docs. Embrace TDD & pairing.

Enjoy!