Photo by Sergey Nivens-Adobe Stock

Real-life risk-taking is different from theory

If someone flips a coin 99 times and gets tails each time, what’s their probability of getting heads on the 100th flip?

In theory, it’s still 50%, because each flip is an independent random event¹ and the previous outcomes have no impact on the next one.

However, if that were to happen in real life in front of your eyes, would you still think the odds are the same? You probably wouldn’t, especially if your money or your life were at stake.

So, why does that happen? Because reality is messier than theory. We do not live in a laboratory or a controlled environment. In real life, small unknown variations can have a huge impact on the result. And all information required to make the “best” decision will rarely be available. Believing that the rules from purely hypothetical models apply to real life is often followed by disappointment.²

For instance, in the coin-flipping example, any sensible individual would think there must be something rigged in the game. We would sense that there’s missing information, and this creates a discrepancy between the expected outcome and the observed one. We may not know what this information is, and we don’t need to. We would just adapt our risk-taking strategy accordingly.

Long story short, in real-life risk-taking situations “experience trumps theory”. It can be argued that Bayesian probability³, which suggests that we should update our beliefs according to previous results even for random-looking events, was developed to partially explain these heuristics.
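As a rough illustration of this kind of belief updating, the sketch below applies a standard Beta-Binomial update to the coin example. The function name and the choice of a uniform Beta(1, 1) prior are assumptions made for illustration, not something taken from the references.

```python
from fractions import Fraction


def probability_next_heads(heads: int, tails: int,
                           prior_heads: int = 1, prior_tails: int = 1) -> Fraction:
    """Posterior predictive P(next flip is heads) under a Beta prior.

    Assumption: the prior is Beta(prior_heads, prior_tails); the default
    Beta(1, 1) treats every possible coin bias as equally plausible.
    """
    return Fraction(prior_heads + heads,
                    prior_heads + prior_tails + heads + tails)


# Before seeing any flips, the estimate matches the textbook answer.
print(probability_next_heads(heads=0, tails=0))    # 1/2

# After 99 tails in a row, the estimate for heads collapses.
print(probability_next_heads(heads=0, tails=99))   # 1/101
```

A stronger prior belief in a fair coin (for example `prior_heads=50, prior_tails=50`) moves the estimate more slowly, but 99 tails still pull it well below 50%.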

In this article, we will discuss a few principles of real-life risk-taking and ultimately how the Scrum framework can support them.

- Perfect is the enemy of good

- Not all risks are equal

- Favour the risks that benefit from uncertainty

- Decision-makers should have skin in the game

1. Perfect is the enemy of good

Focusing on the most practical option available rather than seeking the perfect solution is called the heuristic technique. Heuristics work under defective models. This is significant because any model that’s designed as an abstraction of the real world is somewhat defective.

To illustrate, if you measure the height of a child and have a defective ruler, it can still indicate if the child is growing. It doesn’t prevent you from measuring variations.

As another example, think about how a geolocation app works. Let’s say Google Maps. It’s not perfect at all, but it’s extremely practical and helpful.

Photo By Pixel-Shot Adobe Stock

2. Not all risks are equal

You may have heard this before:

“You have a bigger risk of drowning in a swimming pool than being killed by a pandemic”

This claim is wrong. Not all risks are equal. Why? First, we need to understand the difference between Mediocristan and Extremistan scenarios.

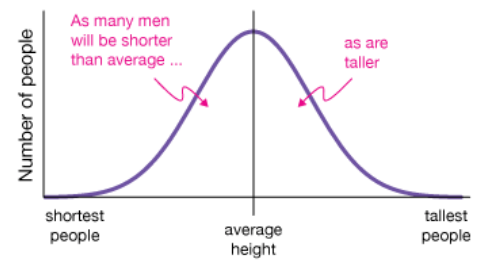

Mediocristan scenario: Randomly select two people. Assume their combined height is 3.9 meters. What’s the most likely combination? 1.95m each? 2.3m and 1.6m?

The variations are bounded by the physical limits of human height. For instance, it won’t be 3.7m and 0.2m. The probabilities are easier to calculate, and the systemic impact is limited. Gaussian (normal) distribution and averages work here.

Extremistan scenario: Randomly select two people. Assume their combined wealth is $100 billion. What’s the most likely combination? $50 billion each?

The range of possible combinations is almost limitless, and the most likely one is extremely lopsided: one of these two random people probably holds nearly all of the $100 billion, while the other holds a tiny fraction of it. In this scenario, inequalities are such that one single observation can disproportionately impact the aggregate or the total.
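The contrast between the two scenarios can be sketched with a small simulation that measures how much of the total comes from the single largest observation. The distributions and their parameters (normal heights with mean 1.7m and standard deviation 0.1m, Pareto wealth with tail index 1.1) are illustrative assumptions, not measured data.

```python
import random


def largest_share(values):
    """Fraction of the total contributed by the single largest value."""
    return max(values) / sum(values)


rng = random.Random(42)  # fixed seed so the sketch is repeatable
n = 10_000

# Mediocristan: heights in metres, assumed to be normally distributed.
heights = [rng.gauss(1.7, 0.1) for _ in range(n)]

# Extremistan: wealth in arbitrary units, assumed to follow a heavy-tailed
# Pareto distribution (tail index 1.1 is a hypothetical choice).
wealth = [rng.paretovariate(1.1) for _ in range(n)]

height_share = largest_share(heights)
wealth_share = largest_share(wealth)

print(f"Tallest person's share of total height: {height_share:.4%}")
print(f"Richest person's share of total wealth: {wealth_share:.4%}")
```

Under these assumptions, the tallest person barely moves the total, while the richest person accounts for a visible slice of it on their own.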

If this still looks confusing, try this: If you go to the moon, come back a few years later, and find out that humanity has been wiped off the face of the earth, which one would be a more likely reason?

A) Swimming pool drownings

B) A pandemic

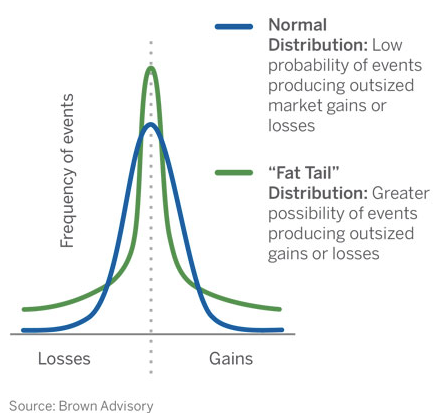

Swimming pool drownings are Mediocristan: the risk is predictable and additive. Pandemics are Extremistan: the risk is unpredictable and multiplicative. If we use the risk management and prediction tools of Mediocristan in the domain of Extremistan, we can fall into the trap of fake empiricism and face enormous surprises with impacts beyond what we are prepared for.

According to Nassim Nicholas Taleb, a mathematical statistician and an options trader, it’s crucial to figure out which condition we are dealing with before taking a risk. Relying on averages in an Extremistan condition can be very dangerous: “Never cross a river if the only information you have is that it is on average four feet deep.”

3. Favour the risks that benefit from uncertainty

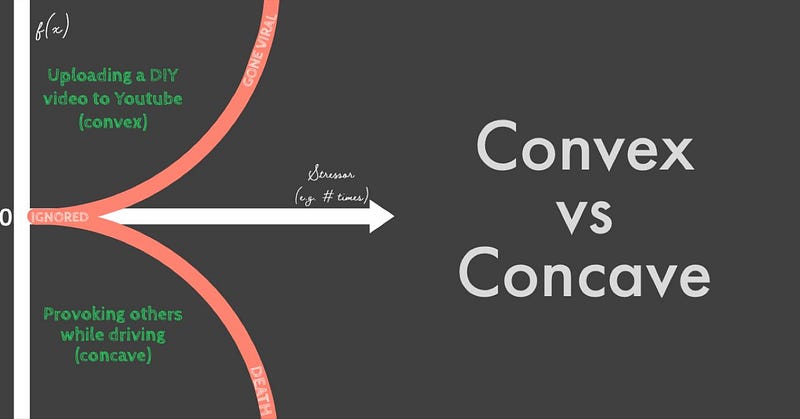

If you upload a helpful DIY (Do It Yourself) video to YouTube, the worst thing that could happen is that it will be ignored, assuming you are not uploading illegal or extremely insensitive content. But if things go very well, even if the probability is very low, it could make you famous and perhaps open paths to getting rich. So that’s a desirable risk (convex)⁴. Heads you win, tails you don’t lose.

On the other side of the coin, if you provoke other motorists while driving, the best outcome you can expect is to be ignored. But if things go south, you could be killed in a road-rage incident. This is not a desirable risk (concave).

If convex risks pay off, you gain a lot. If they fail, you lose nothing or only a little. Concave risks are the opposite.
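A minimal sketch of why convex risks benefit from uncertainty and concave risks suffer from it is shown below. The two payoff shapes are hypothetical stand-ins (gains kept and losses capped at zero, and the reverse), and outcomes are assumed to be normally distributed around zero.

```python
import random
from statistics import fmean


def convex_payoff(outcome: float) -> float:
    """Hypothetical convex bet: keep the upside, lose nothing on the downside."""
    return max(outcome, 0.0)


def concave_payoff(outcome: float) -> float:
    """Hypothetical concave bet: gain nothing on the upside, keep the downside."""
    return min(outcome, 0.0)


def average_payoff(payoff, volatility: float, n: int = 100_000, seed: int = 0) -> float:
    """Average payoff when outcomes are drawn from a normal(0, volatility)."""
    rng = random.Random(seed)
    return fmean([payoff(rng.gauss(0.0, volatility)) for _ in range(n)])


for volatility in (1.0, 3.0):
    convex = average_payoff(convex_payoff, volatility)
    concave = average_payoff(concave_payoff, volatility)
    print(f"volatility={volatility}: convex={convex:+.2f}, concave={concave:+.2f}")
```

In this sketch, raising the volatility increases the average convex payoff and makes the average concave payoff more negative, even though the average outcome itself stays at zero.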

Basically, if something gets better through stressors, uncertainty and variety, it’s a good candidate as a risk-taking and experimenting option.⁴

4. Decision-makers should have skin in the game

Traditional organisations with hierarchical and bureaucratic structures are designed to create a distance between the decision-makers and the risks of their decisions. This method functions almost like insurance for the decision-maker. It transfers the risks of their decisions to some legal documents.

Although it would be irresponsible to say this is universally good or bad since there are more scenarios in the world than one can know about, it shouldn’t be too difficult to figure out how this might impact people’s evaluation of “risk-taking” and “decision-making” in the organisation.

Think about “Losing their jobs due to not being able to complete all project deliverables on the Gantt chart” as the perceived risk for the managers in such an environment, whilst the actual risk for the organisation could be, for example, “Losing competitive advantage and market value as a company due to lack of quick experimentation on new ideas”.

One problem with this approach is that the managers will care more about the former if they are contractually accountable only for the “perceived risk”. In this case, they do not have skin in the actual game the organisation hired them for.

This is like a pilot flying a plane full of passengers by remote control. Would you get on that plane if the pilot were not in it?

I doubt it.

Photo By DC Studio Adobe Stock

How can Scrum help in these areas?

- Scrum encourages people to use heuristics and helps them focus on what’s possible. Each Scrum event is designed to promote slightly better decision-making and risk-taking, because figuring out the perfect decision can be too expensive and impractical. In knowledge work, perfection is usually an illusion that only seems to have been achievable in hindsight.

- Time-boxing works as an enabling constraint to embed a practical approach in the process. Thinking is helpful, and trial & error is a must.

- The Definition of Done creates a boundary to absorb Extremistan risks that can ruin the business. When this commitment, which provides the minimum quality measures required for the product, is ignored, the risk exposure can become immeasurable.

- The Scrum Team’s structure, a self-managed and cross-functional group of professionals who work on a shared goal, is a scientifically proven way⁵ (in the natural sciences as well as the social sciences) to deal with uncertainty and risk, and to generate a sufficient number of responses to match the different scenarios we might face in today’s complex world.

- Scrum has clear accountabilities around instilling quality, maximising value and using the framework effectively. However, the entire Scrum Team is accountable for creating a valuable and useful outcome every Sprint. This dynamic creates transparency around what it takes to be a team member and works like a filter for the people who are ready to give priority to the collective benefit.

Scrum is built on⁶ the premise that “knowledge comes from experience and making decisions based on what is observed”. Regardless of its name, any iterative & incremental framework, including Scrum, is fundamentally designed to deliver the benefits of this approach. It’s all about increasing the amount of evidence and decreasing the number of assumptions in the system.

However, this is not a binary game. We will almost never possess all the theoretical information about a subject that we would need to make perfect decisions.

Knowledge is grounded in practice. The benefits of this attitude go beyond managing the risks that have already been taken. It’s more about learning how to take better risks.

Scrum can help with that.

Feel free to share your comments and insights with me. I am always up for grabbing a virtual coffee: sahin@vaxmo.co.uk

Notes:

1. Logistic map, Chaos and the logistic map, https://en.wikipedia.org/wiki/Logistic_map

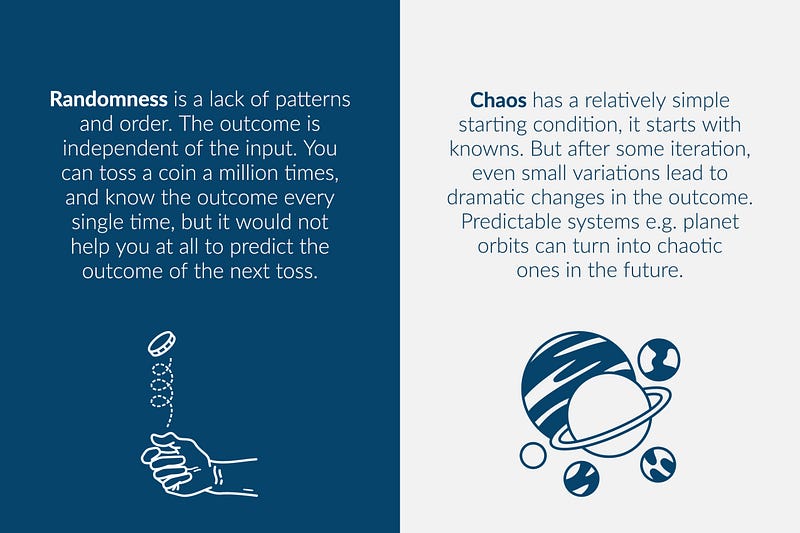

Mind the difference between “random” and “chaotic”

2. Ludic Fallacy, https://en.wikipedia.org/wiki/Ludic_fallacy

3. Explanation of Bayesian probability with a real-life example: How To Update Your Beliefs Systematically — Bayes’ Theorem

4. Taleb, N. N. (2013). Antifragile. Penguin Books, https://en.wikipedia.org/wiki/Antifragility

5. Prof. Yaneer Bar-Yam, Stop Looking For Leaders, Start Looking For Teammates, 2022 & Why teams?, New England Complex Systems Institute (January 29, 2017).

6. The 2020 Scrum Guide, Scrum Theory, https://scrumguides.org/scrum-guide.html#scrum-theory